The surprise Babylon 5 HD remaster came out on Blu-ray recently. Something I was curious about was how difficult it would be to combine the 4×3 Blu-rays with the 16×9 DVDs to get a 16×9 pseudo-HD version of the show, with the edges upscaled but the center using real film.

I did a quick test on a frame from “Points of Departure,” and the answer is “not as easy as I hoped.” I’ve tried this technique before, matching and blending the same film from two different releases (and, apparently, two different digital scans), and I ran into the same problem then: the film doesn’t scan perfectly flat, so each scan has some amount of distortion. I’d have to do further research to see if there are tools I have access to that address this,[1] so this is just a quick test and comparison using Photoshop. The vertical framing of the DVD and Blu-ray doesn’t match exactly, and the DVD’s colors are a little more contrasty, so I had to make some adjustments to get them to match better.
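For anyone who would rather script the color adjustment than eyeball it, here is a minimal sketch of one way to do it. It is not what Photoshop does, and it is not the method used for the test image. It assumes you have already sampled the same picture area from both sources as flat lists of 0–255 values for one channel; the function names `match_levels` and `apply_levels` are made up for this example. The idea is to fit a gain and offset on the overlapping center, then apply the same correction to the whole DVD frame, edges included.

```python
from statistics import fmean, pstdev


def match_levels(sd_overlap, hd_reference):
    """Return (gain, offset) so that gain * sd + offset has the same mean
    and spread as the HD samples taken from the same picture area."""
    sd_mean, hd_mean = fmean(sd_overlap), fmean(hd_reference)
    sd_std, hd_std = pstdev(sd_overlap), pstdev(hd_reference)
    # A flat (zero-spread) SD sample gives no contrast information,
    # so fall back to a pure brightness shift in that case.
    gain = hd_std / sd_std if sd_std else 1.0
    offset = hd_mean - gain * sd_mean
    return gain, offset


def apply_levels(values, gain, offset):
    """Apply the correction and clamp to the 8-bit range."""
    return [min(255.0, max(0.0, gain * v + offset)) for v in values]


# Hypothetical samples: the DVD values are more "contrasty" than the HD ones.
sd_center = [10, 60, 128, 200, 250]
hd_center = [40, 75, 122.6, 173, 208]

gain, offset = match_levels(sd_center, hd_center)
corrected = apply_levels(sd_center, gain, offset)
```

Run once per color channel. A single gain and offset only fixes overall brightness and contrast; it won't correct a difference in gamma or a color cast that varies across the frame.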

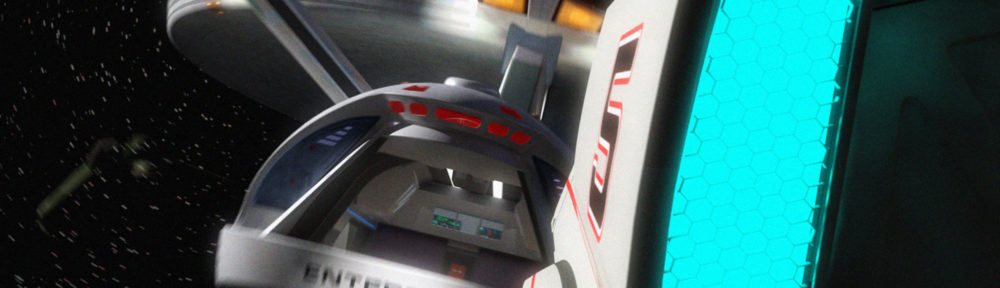

First off, the test image merging the two sources. I upscaled the DVD frame using an AI tool, color-corrected it as described above, and added some grain, while softening the border between the HD frame and the widescreen one:

It could be worse!
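The softened border is essentially a feathered mask. As a rough sketch of the idea (again, not the actual Photoshop steps), the snippet below builds a per-column weight for the HD source that is 0 outside the 4×3 area and ramps up to 1 over a few columns just inside each HD edge, then mixes one row of pixels. The names `feather_alpha` and `blend_row` and the parameter values are assumptions for illustration, and it assumes the HD row has already been padded out to the full widescreen width.

```python
def feather_alpha(width, left, right, feather):
    """Per-column weight of the HD source: 0 outside [left, right),
    ramping linearly toward 1 over `feather` columns inside each HD edge."""
    alpha = []
    for x in range(width):
        if x < left or x >= right:
            alpha.append(0.0)  # no HD data here, use the upscaled DVD only
        else:
            # How many columns in from the nearest HD edge (1 = edge column).
            dist = min(x - left, right - 1 - x) + 1
            alpha.append(min(1.0, dist / (feather + 1)))
    return alpha


def blend_row(hd_row, sd_row, alpha):
    """Mix one row of pixel values: alpha=1 is all HD, alpha=0 is all DVD."""
    return [a * h + (1.0 - a) * s for h, s, a in zip(hd_row, sd_row, alpha)]


# Tiny example: a 12-pixel-wide row with HD covering columns 2..9.
alpha = feather_alpha(width=12, left=2, right=10, feather=3)
row = blend_row([100] * 12, [0] * 12, alpha)
```

For a 1920×1080 frame with a centered 1440-pixel-wide 4×3 picture, `left` would be 240 and `right` 1680. The ramp sits inside the HD area because that is the only place both sources exist to be mixed.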


[1] It feels like a combination of technologies should be able to solve this; I’ve got two slightly different sources of the same ground truth. The HD frame shows exactly how three-quarters of the SD frame should look after it’s upscaled, so it should be possible to have a computer program compare the two, adjust the color, size, rotation, and proportion of the SD frame to match the HD one as closely as possible, apply some ML upscaling (ideally using the very shot that’s being upscaled as training data so the computer can extrapolate the lost detail at the sides of the frame from the existing detail in the middle), and blend the two sources. I know all these features exist independently, but I have no idea how to get them all into one tool. There might also be some complications with the DVDs needing to be detelecined and having scanline issues in the raw data.